Study overview

This study evaluates the reliability and readability of five AI chatbots that offer guidance on concussion-related health issues. As artificial intelligence becomes more integrated into healthcare communication, it is important to understand how well these tools give users accessible and accurate information. The primary objective is to analyze how reliable the chatbots’ information is and whether their responses remain clear and readable for users, including patients and caregivers.

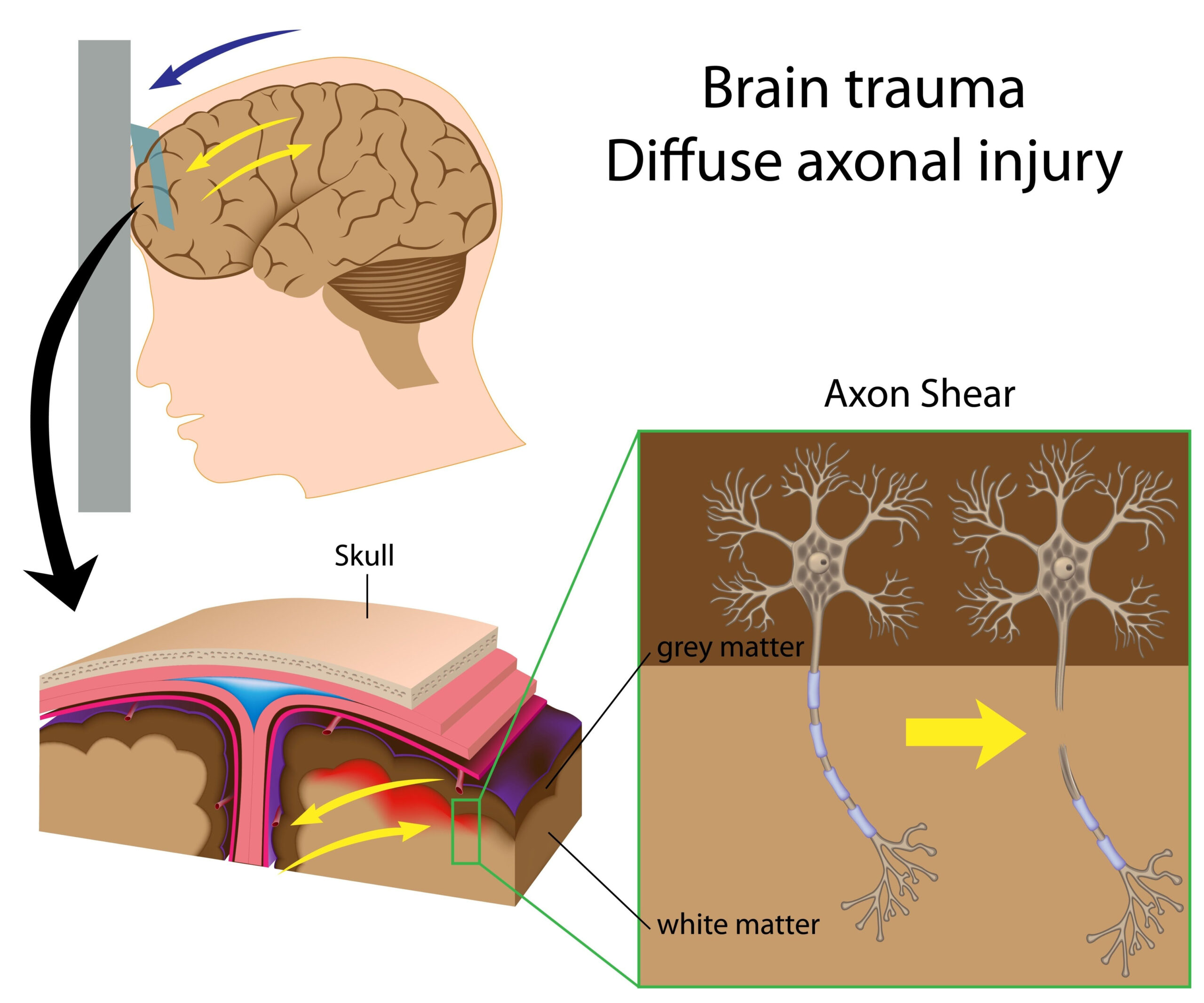

Concussions are common traumatic brain injuries, and effective communication of their management and symptoms is pivotal for ensuring optimal health outcomes. Various AI chatbots have emerged as innovative platforms for disseminating health advice, yet their quality and trustworthiness have not been extensively evaluated. Therefore, this study aims to bridge that gap by comparing several prominent AI models to determine their performance in delivering concussion health information.

The study adopted a systematic approach to select the chatbots, which were assessed based on their ability to respond accurately to commonly asked questions regarding concussions. The evaluation encompassed not only the factual accuracy of the information provided but also the readability levels to ensure that the content is understandable to a broad audience. By doing so, the study addresses the critical need for reliable health communication through artificial intelligence tools that facilitate better patient education and care.

Methodology

The study employed a multi-faceted approach to assess the performance of five distinct AI chatbots, focusing specifically on their reliability in providing accurate concussion health information and the readability of their responses. Initially, researchers conducted a comprehensive literature review to identify the most commonly posed questions related to concussions. This process helped to ensure that the evaluation metrics were aligned with real-world inquiries from patients, caregivers, and health professionals.

To select the chatbots for evaluation, the researchers identified five widely used AI platforms known for offering health-related information. These platforms included a mix of retrieval-augmented models, which pull information from external sources when answering, and pre-trained models, which generate responses from fixed training data. This selection allowed a comparison across different chatbot designs rather than a single type of system.

Each chatbot was then queried with a standardized set of questions concerning concussions, which covered various aspects such as symptoms, management strategies, recovery timelines, and when to seek medical attention. The chatbots’ responses were collected and analyzed for their content accuracy, which involved cross-referencing the information provided with established medical guidelines and resources from reputable health organizations such as the Centers for Disease Control and Prevention (CDC) and the American Academy of Neurology.

In measuring reliability, a scoring system was developed to determine the factual correctness of each response. Points were allocated based on the completeness and accuracy of the information, with higher scores awarded to those responses fully aligned with current clinical recommendations. This scoring not only evaluated factual correctness but also looked at the relevance of the information to the specified questions.
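The scoring idea described above can be illustrated with a minimal sketch. The study's actual rubric, point scale, and weighting are not given, so everything here is an assumption made for illustration: the three criteria are taken from the paragraph (accuracy, completeness, relevance), while the 0 to 5 scale, the equal weighting, and the names `ResponseRating` and `reliability_percent` are hypothetical.

```python
from dataclasses import dataclass

# Assumed scale: each criterion is rated 0-5. The study's real scale is not stated.
MAX_PER_CRITERION = 5
CRITERIA = ("accuracy", "completeness", "relevance")


@dataclass(frozen=True)
class ResponseRating:
    """Hypothetical rating of one chatbot response against clinical guidance."""
    accuracy: int
    completeness: int
    relevance: int

    def __post_init__(self):
        # Reject ratings outside the assumed 0-5 range.
        for name in CRITERIA:
            value = getattr(self, name)
            if not 0 <= value <= MAX_PER_CRITERION:
                raise ValueError(f"{name} must be between 0 and {MAX_PER_CRITERION}")

    def total(self) -> int:
        # Equal weighting of the three criteria (an assumption).
        return self.accuracy + self.completeness + self.relevance


def reliability_percent(ratings):
    """Share of available points earned across all rated responses, as a percentage."""
    if not ratings:
        raise ValueError("at least one rating is required")
    earned = sum(r.total() for r in ratings)
    possible = len(CRITERIA) * MAX_PER_CRITERION * len(ratings)
    return 100 * earned / possible


# Illustrative ratings only, not data from the study.
example_ratings = [ResponseRating(5, 4, 5), ResponseRating(3, 3, 4)]
example_score = reliability_percent(example_ratings)
```

Expressing the result as a percentage of available points keeps chatbots comparable even if they were rated on different numbers of questions.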

Readability assessments were conducted using established readability formulas, including the Flesch-Kincaid Grade Level and Gunning Fog Index. These tools provided a quantitative measure of how easily the information could be understood by the general population. The results were categorized into different levels of readability (e.g., suitable for elementary school students, high school students, etc.), enabling the researchers to recognize which chatbot produced the most accessible content for users with varying educational backgrounds.
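As a rough illustration of the two formulas named above, the sketch below computes the Flesch-Kincaid Grade Level and the Gunning Fog Index from plain text. The formulas themselves are standard; the syllable counter is a simple vowel-group heuristic, and the grade-band cutoffs in `reading_level_band` are assumptions for illustration, not the study's categories. Dedicated readability tools use more careful tokenization and may give slightly different numbers.

```python
import re


def count_syllables(word: str) -> int:
    """Heuristic syllable count: groups of consecutive vowels, at least one."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    # Treat a trailing "e" as silent (e.g. "home"), except in "-le" endings.
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def text_stats(text: str):
    """Return (sentence count, word count, list of per-word syllable counts)."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    return len(sentences), len(words), [count_syllables(w) for w in words]


def flesch_kincaid_grade(text: str) -> float:
    n_sent, n_words, syllables = text_stats(text)
    return 0.39 * (n_words / n_sent) + 11.8 * (sum(syllables) / n_words) - 15.59


def gunning_fog(text: str) -> float:
    n_sent, n_words, syllables = text_stats(text)
    # "Complex" words are approximated here as words with three or more syllables.
    complex_words = sum(1 for s in syllables if s >= 3)
    return 0.4 * ((n_words / n_sent) + 100 * complex_words / n_words)


def reading_level_band(grade: float) -> str:
    """Map a grade level to a coarse band. Cutoffs are illustrative assumptions."""
    if grade <= 5:
        return "elementary school"
    if grade <= 8:
        return "middle school"
    if grade <= 12:
        return "high school"
    return "college"


plain = "Rest at home. Call a doctor if you feel worse."
dense = ("Persistent post-concussive symptomatology necessitates "
         "comprehensive neurological evaluation.")
```

Calling `reading_level_band(flesch_kincaid_grade(dense))` on the second sample places it in the highest band, while the first sample falls in the lowest, which mirrors the kind of gap the readability assessment is meant to surface.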

Statistical analysis was then performed to compare the chatbots on their reliability and readability scores. Mean scores, standard deviations, and inter-chatbot variability were examined to draw conclusions about each chatbot’s performance on both dimensions. This methodology aimed to give a clear picture of how these AI systems perform in health communication, specifically for concussion advice.
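The descriptive statistics mentioned above can be sketched with Python's standard library. The scores and chatbot labels below are hypothetical placeholders, not data from the study, and the source does not say which statistical tests were used, so this sketch stops at per-chatbot means and standard deviations plus the spread between the highest and lowest mean as one simple measure of inter-chatbot variability.

```python
from statistics import mean, stdev

# Hypothetical per-question reliability scores. NOT data from the study.
scores_by_chatbot = {
    "chatbot_a": [8, 9, 7, 9],
    "chatbot_b": [5, 6, 4, 7],
    "chatbot_c": [7, 7, 8, 6],
}


def summarize(scores):
    """Mean and sample standard deviation of the scores for each chatbot."""
    return {
        bot: {"mean": mean(values), "sd": stdev(values)}
        for bot, values in scores.items()
    }


def spread_of_means(summary):
    """Gap between the highest and lowest chatbot mean."""
    means = [stats["mean"] for stats in summary.values()]
    return max(means) - min(means)


summary = summarize(scores_by_chatbot)
gap = spread_of_means(summary)
```

The same two functions apply unchanged to readability scores, so both dimensions can be summarized side by side.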

Key findings

The evaluation of the five AI chatbots revealed a range of performances regarding their reliability in delivering accurate concussion health advice and their readability for various audiences. Results indicated that some chatbots excelled in accuracy, often providing comprehensive and up-to-date information, while others struggled with factual correctness and clarity, which could hinder user understanding in critical situations.

In terms of reliability, the scoring system showed that one chatbot, which used a retrieval-augmented approach, consistently provided information that aligned with established medical guidelines. This chatbot scored significantly higher than its counterparts, particularly in categories concerning the management of concussion symptoms and recovery timelines. Notably, its responses matched CDC and American Academy of Neurology recommendations and reflected current best practices, underscoring the chatbot’s potential as a reliable source.

Conversely, another chatbot, utilizing a pre-trained model, displayed shortcomings in accuracy. It occasionally provided outdated information or failed to address the complexities of concussion management, particularly in cases requiring immediate medical attention. This disparity highlights the importance of continuous updates and monitoring in AI systems to ensure that users receive the most current and relevant advice regarding health matters.

Readability assessments revealed a concerning trend. While some chatbots attained higher accuracy scores, their readability was not necessarily commensurate. For instance, the chatbot with the best reliability had responses that were rated at a college reading level, potentially alienating a significant segment of the population, including those with lower educational backgrounds or limited health literacy. Other chatbots produced more accessible content, with some responses rated at a middle school level, making essential health information easier to digest for younger users and caregivers.

Statistical analysis of chatbot performances further underscored the mixed outcomes in reliability and readability. The mean reliability scores varied substantially, with discrepancies of up to 15 points between the top-performing and lowest-performing chatbots. These findings emphasize the need for users to approach AI-generated health advice with caution, recognizing that the effectiveness of these tools can greatly differ depending on the platform used.

Additionally, variability in readability scores indicated that while some chatbots aimed for accessibility, their simpler explanations sometimes came at the cost of depth. This could lead to oversimplification or the omission of critical detail that users need to fully understand their condition.

Overall, these findings not only highlight the potential of AI chatbots as supplementary resources in concussion health advice but also underscore a crucial need for ongoing evaluation and refinement. In an era where technology and health intersect increasingly, ensuring that AI tools communicate effectively is essential in safeguarding patient understanding and care.

Clinical implications

The findings underscore the pressing need for healthcare providers and developers of AI-based systems to prioritize clarity and reliability in the information these chatbots convey. Given the pivotal role that accurate concussion information can play in preventing further injury and promoting effective recovery, it is critical that users can trust the advice such systems provide. Given the varying performances noted, stakeholders should integrate feedback mechanisms that improve content quality and keep it aligned with the latest clinical practices.

Moreover, the evident disparities in readability levels serve as a reminder that health information must be tailored for diverse audiences, including individuals with varying educational backgrounds and health literacy. For instance, while technical terms may be familiar to healthcare professionals, they can create barriers for laypersons trying to understand essential health issues. AI chatbots that cater to a wider range of reading levels can empower users to make informed decisions, especially in emergencies where quick comprehension is vital.

Furthermore, the implications extend beyond individual patient interactions. The healthcare ecosystem is increasingly relying on digital communication tools, making it essential for institutions to ensure these technologies work effectively. The insights gained from this study can guide future enhancements in AI chatbot design, promoting a model where continuous learning and updating are integral components of the system. This can also facilitate the incorporation of feedback from users, which can be instrumental in improving both the accuracy and the readability of information shared by these systems.

As the integration of artificial intelligence continues to evolve in the health sector, a collaborative approach involving healthcare professionals, AI developers, and regulatory bodies will be necessary. Such collaboration can help in standardizing best practices for content validation, ensuring that AI chatbots operate not only as efficient informational tools but also as trustworthy companions in patient care. By addressing the dual challenges of reliability and readability, the goal of equitable access to health information for all, regardless of background, can be achieved.